Highly Efficient Multimodal Model for Enterprise-Ready Agents Without Surprise Bills

The recently launched AibleClaw is an enterprise solution for long-running agents, with built-in governance and guardrails, that uses the NVIDIA OpenShell runtime for secure execution. It brings consistency, control, and cost containment to the creativity and capability of Claws.

Our customers want to quickly prototype long-running agents (Claws) in air-gapped environments with no surprise per-token bills. Claws can consume significantly more tokens than traditional chat interactions because they often reason for long periods, spawn sub-agents that consume tokens of their own, and wake up to perform tasks on an ongoing basis.

Aible enables our users to run all aspects of the agent, including the models, within air-gapped NVIDIA DGX Spark devices so that there are no surprise bills. For example, Shay Wilson, CIO at the State of Alaska Legislative Affairs Agency, wrote, “We built our first Aible AI agent running on NVIDIA DGX Spark in 5 minutes. Running on our DGX Spark in a secure air-gapped environment allows me to prototype confidently, eliminate surprise spending, and scale to broader use cases only when we’re ready.”

Central to the launch of AibleClaw was the NVIDIA Nemotron 3 Super model, which is well suited to constrained reasoning and planning decisions with AibleClaws and can run on the NVIDIA DGX Spark desktop supercomputer. Additionally, the OpenShell runtime hosts each agent in its own isolated sandbox and enforces security and privacy policies at the infrastructure layer. But our customers also wanted to analyze audio and video files. For example, one customer wanted to transcribe minutes from meetings that needed to remain private. Another wanted to find key insights in video recordings. Running multiple models for different modalities would not only take up more space but also introduce problems such as latency and fragmented context, leading to a poor user experience.

Today, Aible furthers its leadership in delivering enterprise AI agents and Claws by adopting the newly released NVIDIA Nemotron 3 Nano Omni model. It supports all of the use cases that we supported with Nemotron 3 Super, such as agentic analytics and document processing, and adds image, audio, and video support in a single model that can run air-gapped on the NVIDIA DGX Spark. Note that agents that process images, audio, and video require even more tokens than agents that only process text. At a time when token consumption is top of mind and model providers are increasing their consumption-based pricing, being able to run NVIDIA Nemotron 3 Nano Omni on air-gapped hardware completely addresses concerns about surprise bills.

Why NVIDIA Nemotron 3 Nano Omni Matters

Nemotron 3 Nano Omni is the highest-efficiency open multimodal model available, with leading accuracy in its class. But what makes it essential for enterprise agents is what it can actually process: it takes text, image, audio, and video as input and reasons across all of them.

This is a fundamental unlock because real enterprise operations don't live in spreadsheets alone. Warehouse floors and retail aisles have video feeds. Inspection workflows involve photos and video. Frontline workers communicate verbally and via text. Until now, however, building agents that could reason across these modalities required stitching together multiple models, with significant complexity and cost. Nemotron 3 Nano Omni handles this natively in a single model, and it does so efficiently enough to run on edge devices like the NVIDIA DGX Spark.

For Aible, this means long-running agents can now watch, listen, read, and reason, all within the governed, secure architecture our enterprise customers require.

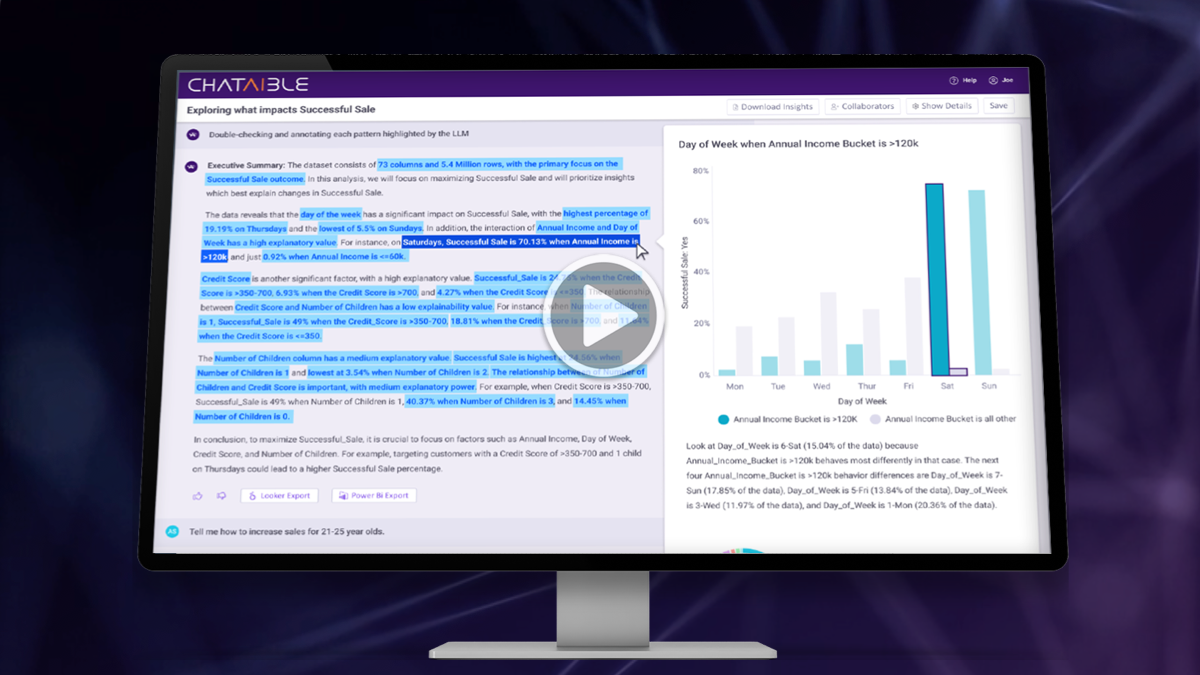

See it in action: NVIDIA Nemotron 3 Nano Omni & Aible

Users have previously used Aible for a wide variety of multimedia use cases: summarizing videos for insights that may lead to better sales targeting, detecting lost baggage at terminals, and even, at the State of Nebraska, detecting pigs that are acting aggressively (e.g., biting tails) as an early indicator of disease in the animal population. Each of these use cases required two different models: a video model to summarize the key features of the video, and then a reasoning language model to make decisions or take actions based on that summary. Nemotron 3 Nano Omni offers the opportunity to do all of these steps with a single model, using fewer overall tokens and avoiding the loss of context that occurs when one model summarizes the video and a second model acts only on the summary.

We tested Nemotron 3 Nano Omni on a specific use case: Personal Protective Equipment (PPE) compliance. This is a real problem at worksites such as construction sites, where workers can get seriously hurt if they don’t properly use equipment such as hardhats and safety vests. Employers also face significant legal and compliance risks on top of the employee safety concerns. But it is impossible for people to continuously monitor video feeds for PPE compliance. This is where autonomous long-running agents can create significant value.

The following video shows the Nemotron 3 Nano Omni in action for this use case.

In the video, a user first chats with Nemotron 3 Nano Omni to understand what is happening in a video of a worksite. They then use Aible to generate a long-running agent (Claw) that uses Nemotron 3 Nano Omni to monitor such videos on an ongoing basis and notify users of violations via Slack. They can even adjust or train the agent by providing simple feedback on its output. No technical skills are required to build or train the agent.
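
Aible generates this agent without any code. Purely to illustrate the control flow described above, a monitoring loop might look like the following minimal sketch. Everything in it is hypothetical: `Finding`, `monitor_clips`, the `analyze` callable (standing in for a call to a multimodal model), and the `notify` callable (standing in for a Slack message) are assumptions for illustration, not Aible or NVIDIA APIs.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, List


@dataclass
class Finding:
    """One PPE observation for a clip (hypothetical structure)."""
    clip: str
    violation: bool
    detail: str


def monitor_clips(
    clips: Iterable[str],
    analyze: Callable[[str], Finding],
    notify: Callable[[str], None],
) -> List[Finding]:
    """Analyze each clip and send a notification for every violation.

    `analyze` stands in for a multimodal model call and `notify` for a
    Slack message; both are injected so the loop stays easy to test.
    """
    violations = []
    for clip in clips:
        finding = analyze(clip)
        if finding.violation:
            notify(f"PPE violation in {finding.clip}: {finding.detail}")
            violations.append(finding)
    return violations


# Stub analyzer for demonstration only: flags clips whose name
# contains "no_hardhat". A real agent would inspect the video itself.
def fake_analyze(clip: str) -> Finding:
    missing = "no_hardhat" in clip
    detail = "worker without hardhat" if missing else "compliant"
    return Finding(clip, missing, detail)


sent: List[str] = []  # collects messages instead of posting to Slack
flagged = monitor_clips(
    ["site_a.mp4", "site_b_no_hardhat.mp4"], fake_analyze, sent.append
)
print(sent)
```

Injecting `analyze` and `notify` keeps the loop independent of any particular model endpoint or messaging service, so either can be swapped without touching the monitoring logic.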

Driving Efficiency, with Accuracy

At GTC 2025, Aible demonstrated that a smaller, 8 billion parameter model, with just $5 of post-training, could outperform much larger models on specific enterprise tasks such as answering questions on structured data that uses enterprise-specific terminology. This happens because generalized large language models have never seen the unique terminology used at a specific enterprise, while the post-trained small model has been trained specifically to understand that enterprise's data.

Nemotron 3 Nano Omni extends this efficiency advantage into the multimodal domain. Instead of running separate models for vision, audio, and text, each with its own compute cost and latency, enterprises get a single model that reasons across all modalities. For AibleClaw, this means fewer models to manage, lower infrastructure cost, and faster agent response times, without sacrificing the accuracy that enterprise decisions demand. Each tool the agent leverages remains completely deterministic and logged.

Open Matters for the Enterprise

Nemotron 3 Nano Omni is truly open, not just open-weight. We explained extensively why this matters in our Nemotron 3 Super blog, and the same principles apply here. Enterprise teams who work with Aible have told us clearly what they want: the ability to inspect training data to mitigate legal risk, confidence that the model will remain open and supported, and the ability to fine-tune on their own domain-specific data. With Nemotron 3 Nano Omni, all of this is possible. Your data stays with you. The model runs inside your environment. And you can customize it to your exact needs.

Fine-Tuning with the NVIDIA NeMo Stack

Aible supports the full NVIDIA NeMo stack. With NeMo microservices including NeMo Customizer, NeMo Evaluator, and NeMo Data Designer, enterprises can soon fine-tune Nemotron 3 Nano Omni and deploy it securely within Aible. We are working closely with NVIDIA to enable end-to-end, automated, business-user-led post-training of Nemotron 3 Nano Omni, leveraging the NVIDIA data flywheel, and expect it to be in production soon.

This follows the same approach we pioneered with Nemotron 3 Super: start with larger models to bootstrap the training process, collect fine-grained user feedback through our "train AI agents like interns, not pets" methodology, and use that feedback to post-train progressively smaller models until you find the smallest model that meets accuracy requirements for production. The multimodal capabilities of Nemotron 3 Nano Omni now extend this pipeline to visual and audio inputs, meaning enterprises can post-train models that understand not just their data, but their physical operating environments.
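
The "progressively smaller models" step can be sketched as a simple search. This is a minimal illustration under stated assumptions, not Aible's pipeline: `smallest_passing_model` and the `evaluate` callable are hypothetical, and the model names, sizes, and accuracy figures below are made up for the example.

```python
from typing import Callable, Optional, Sequence, Tuple


def smallest_passing_model(
    candidates: Sequence[Tuple[str, float]],
    evaluate: Callable[[str], float],
    min_accuracy: float,
) -> Optional[str]:
    """Walk from the largest model down and keep the last one that passes.

    `candidates` holds (model_name, size_in_billions_of_parameters) pairs.
    `evaluate` is a hypothetical callable that returns the accuracy, in
    [0, 1], of the post-trained model with that name.
    Returns None if even the largest model misses the bar.
    """
    best = None
    for name, _size in sorted(candidates, key=lambda c: c[1], reverse=True):
        if evaluate(name) < min_accuracy:
            break  # stop at the first model that is too small to pass
        best = name
    return best


# Made-up sizes and accuracies, for illustration only.
scores = {"large": 0.95, "medium": 0.92, "small": 0.84}
models = [("large", 70.0), ("medium", 30.0), ("small", 8.0)]
choice = smallest_passing_model(models, scores.__getitem__, min_accuracy=0.90)
print(choice)
```

Stopping at the first failure assumes accuracy does not improve again at even smaller sizes; if that assumption does not hold for a given task, every candidate would need to be evaluated.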

All of this runs under the same Aible governance controls: approved plans, approved tools, approved data sources, deterministic logging, and end-to-end security with NVIDIA NeMo Guardrails and Cisco AI Defense.

Get Started

To see Nemotron 3 Nano Omni running with Aible, request a demo here.

Relevant News

NVIDIA Announcement: NVIDIA Launches Nemotron 3 Nano Omni Model
